the Creative Commons Attribution 4.0 License.

the Creative Commons Attribution 4.0 License.

Development of operational decision support tools for mechanized ski guiding using avalanche terrain modeling, GPS tracking, and machine learning

Pascal Haegeli

Roger Atkins

Patrick Mair

Yves Bühler

Snow avalanches are the primary mountain hazard for mechanized skiing operations. Helicopter and snowcat ski guides are tasked with finding safe terrain to provide guests with enjoyable skiing in a fast-paced and highly dynamic and complex decision environment. Based on years of experience, ski guides have established systematic decision-making practices that streamline the process and limit the potential negative influences of time pressure and emotional investment. While this expertise is shared within guiding teams through mentorship, the current lack of a quantitative description of the process prevents the development of decision aids that could strengthen the process. To address this knowledge gap, we collaborated with guides at Canadian Mountain Holidays (CMH) Galena Lodge to catalogue and analyze their decision-making process for the daily run list, where they code runs as green (open for guiding), red (closed), or black (not considered) before heading into the field. To capture the real-world decision-making process, we first built the structure of the decision-making process with input from guides and then used a wide range of available relevant data indicative of run characteristics, current conditions, and prior run list decisions to create the features of the models. We employed three different modeling approaches to capture the run list decision-making process: Bayesian network, random forest, and extreme gradient boosting. The overall accuracies of the models are 84.6 %, 91.9 %, and 93.3 % respectively compared to a testing dataset of roughly 20 000 observed run codes. The insights of our analysis provide a baseline for the development of effective decision support tools for backcountry avalanche risk management that can offer independent perspectives on operational terrain choices based on historic patterns or as a training tool for newer guides.

- Article

(9731 KB) - Full-text XML

- BibTeX

- EndNote

Snow avalanches are a complex and dynamic natural hazard, responsible for an average of approximately 140 recorded fatalities annually in North America and Europe (Colorado Avalanche Information Center, 2024; Jamieson et al., 2010; Techel et al., 2016). The majority of these avalanche fatalities are backcountry recreationists, and the avalanche is commonly triggered by a member of the victim's party (Schweizer and Lütschg, 2001). Terrain selection is the primary tool for managing avalanche risk when traveling in the backcountry. A wide range of factors need to be considered to select appropriate terrain, including current avalanche conditions, slope incline, forest density, aspect, elevation, and potential for overhead hazards or terrain traps. The dynamic nature of avalanche hazard conditions and sheer number of influences on avalanche terrain severity make choosing appropriate terrain challenging.

Due to the complexity of the terrain selection process, there is a long-standing desire to provide recreationists with decision-making aids for making better-informed decisions about when and where to travel in the backcountry. Early tools such as the seminal graphical reduction method (Munter, 1997), the stop-or-go method (Larcher, 1999), the SnowCard (Engler and Mersch, 2001), the NivoTest (Bolognesi, 2000), or the Avaluator (Haegeli, 2010; Haegeli and McCammon, 2007) provided users with relatively simple, analog workflows to combine information on conditions (mainly represented by the danger rating published by an avalanche warning service) with terrain information (primarily slope incline) to assess the severity of different routes. Current trip planning tools such as WhiteRisk (https://whiterisk.ch/, last access: June 2024) or Skitourenguru (https://www.skitourenguru.ch/, last access: June 2024) are modern incarnations of the original approaches that take advantage of recent developments in avalanche terrain modeling to describe the severity of avalanche terrain in more detail. While these tools can be effective for general recreationists, one key challenge for developing more advanced decision-making aids is the scale mismatch when combining public avalanche danger ratings with terrain information. It is the combination of this scale mismatch and the current tools' focus on the public avalanche danger rating that limits their value for more complex decision-making contexts such as professional guiding or advanced amateur recreation. In the case of mechanized ski guiding in Canada, the decision-making process includes an added layer of operational considerations, which further increases complexity.

Based on decades of practical experience, the mechanized skiing industry has developed a structured and iterative process to select terrain that is appropriate for skiing on a daily basis (Israelson, 2015). The decision-making process consists of four major components. First, guides assess current avalanche hazard conditions and produce an avalanche forecast that is relevant for the entire guiding tenure. Second, they create a run list based on a predefined inventory of skiable terrain within the tenure which determines which ski runs are available for guiding based on the current conditions. Based on the run list and operational conditions for the day (e.g., weather conditions, snow quality, skills and preferences of guests, flying logistics), the third step is selecting which ski runs will be used for the day, which is carried out by lead guides in collaboration with the guiding team. The selection of ski runs is an ongoing process throughout the day which can be altered by changing avalanche or weather conditions. Finally, many ski runs contain multiple ski lines with different terrain characteristics and exposure to avalanche hazard. It is the responsibility of the guide of each group to select an appropriate ski line based on the evaluation of slope-scale avalanche conditions, ski quality, and operational considerations.

The practice of creating a daily run list helps guiding teams to get on the same page for the day and establishes a list of potential terrain that has been deemed appropriate for the day's conditions. Individual ski runs can be coded open for guiding (green), conditionally open for guiding (yellow), closed for guiding (red), or not considered (black). A conditionally open run indicates that a specific condition must be met prior to opening the run, which is often determined based on field observations. Black codes essentially represent non-decisions (i.e., default) describing the situation when guides do not think the run is worth discussing during their run coding meeting. The reasons for not discussing a run may include insufficient snow coverage on a run, the run being too far away given current flying conditions, the terrain being obviously too hazardous to consider for current conditions, or too much uncertainty for making an informed decision. Hence, the causes of a run not being coded clearly differ from a run being coded red versus green. In addition, guides' personal references and biases can impact whether a run is coded as black. The process of coding runs during the morning meeting prior to going skiing gives the opportunity for a consensus-based decision process and helps limit emotional and time pressures that can impact decision-making in the field. HeliCat Canada, the association of mechanized skiing operations in Canada, identifies daily run lists as a crucial component and industry standard of avalanche risk management practices (HeliCat Canada, 2024). In mechanized guiding operations snow avalanches account for 77 % of the overall risk of death, and the avalanche fatality rate is approximately 1 fatality in 100 000 skier days (Walcher et al., 2019).

Quantitatively describing the run list coding process in a way that provides insight and offers added value for participating operations requires sophisticated model approaches that can consider the wide range of relevant factors and capture the nuanced nature of these decisions. Prior research has used regression analyses for capturing decision-making processes (Sterchi et al., 2019; Thumlert and Haegeli, 2018), which assumes that the decision to open or close a run can be represented as a linear combination of factors. These approaches provided useful starting points for capturing the complexity of guiding decisions but are limited by the modeling methods. Purely data-driven machine learning methods, such as using self-organizing maps for grouping runs based on run code patterns (Sterchi and Haegeli, 2019), have also shown promise but are prone to detecting spurious relationships, and the black-box nature of the algorithms makes them difficult to understand and trust.

Recent advances in artificial intelligence and machine learning have led to the development of a wide range of different algorithms which show promise for both examining guide decisions in more sophisticated ways and developing meaningful operational decision support tools. Bayesian networks (BNs) offer an attractive alternative to the existing methods due to their ability to use expert knowledge to model complex decision processes (Fenton and Neil, 2019). Decision-tree-based methods, such as random forest (RF) and extreme gradient boosting (XGB), are also attractive for modeling complex decision-making tasks due to their ability to automatically account for complex relationships within the data and their track record of producing accurate predictions in a variety of modeling domains (Breiman, 2001; Chen and Guestrin, 2016). Furthermore, the improvement of methods for interpreting the output of machine learning models has led to a greater ability to understand what is going on under the hood of black-box models, which makes them more transparent and has the potential to improve trustworthiness in implementing these tools in operational settings (Molnar, 2022).

The objective of this paper is to examine and describe the run list coding process at a mechanized skiing operation using BN, RF, and XGB approaches and discuss their potential for the design of operational decision support tools for the mechanized skiing industry. We explore the factors that influence run list decisions and the relationships within the decision-making process. The empirical foundation of the decision-making models is based on seven seasons of operational data (winter 2015/2016–winter 2022/2023) as well as high-resolution avalanche terrain modeling. We test and compare the performance of the decision-making models as predictive tools and use interpretable machine learning methods to understand the inner workings of the black-box models. The insights from this study lay the foundation for collaboration with guiding operations to create real-world decision support tools that capture historic decision-making patterns with the potential for integration into guide training and daily operational decision-making practices.

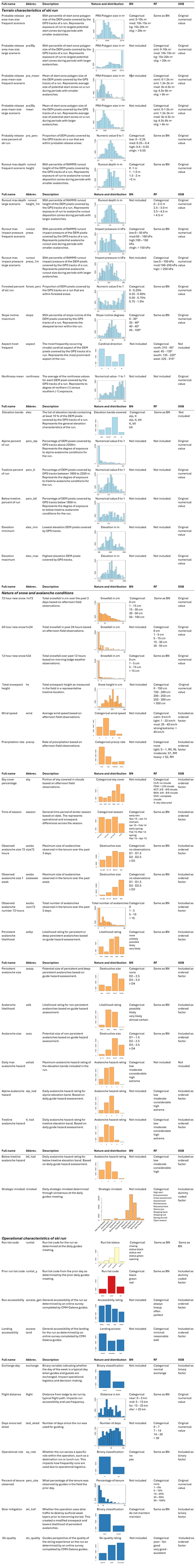

Capturing the critical factors for the run list coding process at an operation requires a variety of different datasets which can be grouped into factors that characterize the terrain within each ski run, current conditions, and operational factors and constraints. This section first introduces the study area and datasets that we used to capture the run list decision-making process. It then discusses the three modeling methods and our approach to model evaluation in detail. A table of all variables included in the decision support models is in Appendix A, including a description of the variable, a histogram of the variable distribution, and how it is applied in each model. The code necessary to reproduce our analysis is available at https://doi.org/10.17605/OSF.IO/6DHMX (Sykes et al., 2024b), and all code is written in the R program for statistical computing (R Core Team, 2024). Due to the large number of data sources and variables included in the present analysis, it is not possible to describe every processing step in complete detail within the constraints of this paper. However, interested readers are encouraged to reach out to the corresponding author for more details.

2.1 Study area

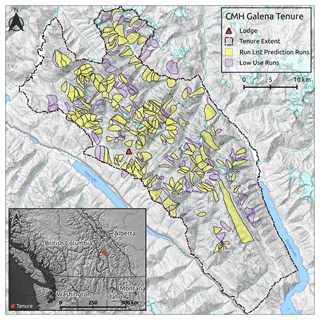

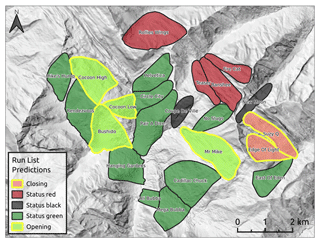

Canadian Mountain Holidays (CMH) Galena Lodge is a mechanized skiing operation located in the Selkirk Mountains near Trout Lake, BC, Canada (Fig. 1). The Selkirk Mountains have a transitional snow climate prone to persistent weak layers of surface hoar and faceted layers associated with icy crusts (Haegeli and McClung, 2007). Most of the terrain in the CMH Galena tenure is forested, but there are also high alpine zones with glaciated ski runs. Within their roughly 1200 km2 tenure are 295 predefined ski runs (Fig. 1), which are individually coded each morning. For this research we only included ski runs that are completely within the operating boundaries of CMH Galena's tenure, where we have collected at least 10 GPS tracks over the study period (see Sect. 2.2.2) and where information about operational considerations was available (see Sect. 2.2.3). This results in an analysis dataset for 192 ski runs, which are highlighted in yellow in Fig. 1.

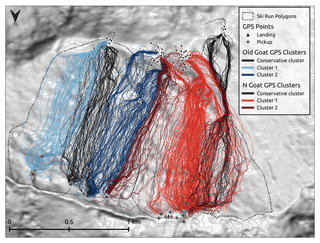

2.2 Run characteristics

To characterize the terrain in the CMH Galena tenure, we modeled avalanche start zones and runout zones using state-of-the-art avalanche terrain modeling methods including high-resolution satellite stereo photogrammetry, potential avalanche release area (PRA) modeling, and RAMMS dynamic avalanche simulations (Sykes et al., 2022). The output of these models describes the terrain across the entire tenure, but to better understand the characteristics of the terrain where guides commonly travel, we focused on the characteristics of raster pixels within a 20 m buffer of GPS tracks that have been collected by the research team to record guides' terrain choices since the 2015/2016 winter season. Based on discussions with guides, we learned that guides only consider the “most conservative line” within the run during the run list coding process. Therefore on runs that are heavily used, we applied a clustering approach to identify the most conservative ski line from the collected GPS tracks and only extracted terrain characteristics from GPS tracks belonging to the most conservative cluster. To include the terrain characteristics in our analysis, we calculated summary statistics to represent the terrain on each ski run. In addition to the physical terrain characteristics, we also included operational factors for each ski run that play a role in the decision-making process. The following paragraphs explain each of these steps in more detail.

2.2.1 Avalanche terrain modeling

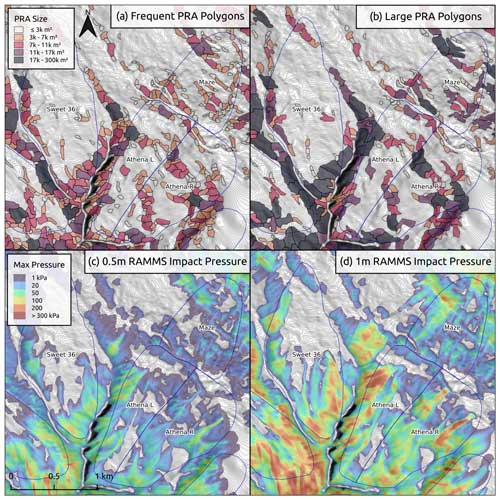

The data we used to characterize avalanche terrain at CMH Galena include elevation, forest cover, exposure to potential avalanche release areas (PRAs), and avalanche runout zones. Elevation data came from a SPOT 6 satellite stereophotogrammetry 5 m DEM, and forest cover was estimated using land cover classification of RapidEye 5 m satellite imagery (Sykes et al., 2022). We used a PRA model to estimate the extent and size of avalanche start zones based on slope angle, aspect, curvature, roughness, and forest density (Bühler et al., 2013, 2018, 2022; Sykes et al., 2022). To quantify exposure to overhead hazard, we used the large-scale hazard indication modeling approach described by Bühler et al. (2022) including the avalanche dynamics model RAMMS (Christen et al., 2010) to simulate the runout distance, velocity, impact pressure, and flow height for avalanches originating from all 111 937 identified PRA polygons. The runout impact pressure was used to estimate the potential size of avalanches, and flow height was used to estimate areas where skiers could potentially be buried deeply, commonly known as terrain traps.

Figure 2Comparison of PRA polygons (a, b) and runout impact pressure (c, d) for frequent (a, c) and large (b, d) runout simulations. The frequent PRA and impact pressure simulations represent smaller, storm slab avalanches, whereas the large PRA and impact simulations represent deeper, more connected persistent weak layer avalanches.

We simulated PRAs and overhead hazard for two different avalanche scenarios (Fig. 2): a frequent scenario targeting smaller storm snow slab avalanches that are commonly encountered (Sykes et al., 2022) and a large scenario that is intended to capture deeper and more connected avalanches that are more typical of periods with active persistent weak layers. For the frequent scenario we use the 10-year return period parameters to identify potential release area polygons, and we used the 30-year parameters from prior research conducted by Bühler et al. (2022) to increase the size for the large scenario. The size of PRAs is typically larger for the large avalanche scenario, but the extent to which the release area polygons differ between the two scenarios depends on the local terrain characteristics. For the RAMMS simulations, we used release depths of 0.5 and 1 m for the frequent and large scenarios respectively. These release depth values are based on discussions with local guides and are targeted at the type of avalanche activity we aim to capture with the simulations.

2.2.2 GPS tracking

Starting in the winter of 2015/2016, the Simon Fraser University Avalanche Research Program has collaborated with several mechanized ski guiding operations in western Canada to collect high-resolution information on the terrain skied. The location information was collected with custom-designed GPS trackers which recorded participating guides' positions every 4 s over the course of a week (Thumlert and Haegeli, 2018). At CMH Galena, the research team has collected 15 111 GPS tracks over seven winter seasons (2020/2021 season is missing due to COVID-19 restrictions).

Figure 3Example of GPS clustering approach, with the most conservative clusters of GPS tracks shown with black lines and other GPS track clusters shown by color-coded lines.

We leverage the GPS tracking data in our run list decision-making model by using the GPS track coordinates to extract terrain characteristics for each run. This method is more accurate than using the predefined run polygons (Fig. 3) because it focuses the spatial extent of the terrain characterization on only the portion of the run polygon that is actually skied. Since the most conservative ski line matters the most for opening or closing a run, we used a clustering approach to further refine the portion of the run that we use to characterize the terrain on heavily used runs, which we defined as having 50 or more GPS tracks over the data collection period (n = 65).

To identify the most conservative line within the available GPS tracks associated with a ski run polygon, we grouped the tracks using fuzzy analysis clustering, a probabilistic variant of the k-medoids clustering approach described in Chap. 4 of Kaufman and Rousseeuw (2005) and implemented in the fanny function of the cluster package in R (Maechler et al., 2022). In comparison to hard or deterministic clustering, fuzzy clustering calculates membership probabilities for each data point to describe how likely they belong to a particular cluster. This allows the method to provide better insight into datasets where the differences between clusters are more gradual (Kaufman and Rousseeuw, 2005). The distance matrix used for the clustering was a combination of two normalized distance matrices: one for the geographic location represented by the start and end point of the GPS tracks (i.e., coordinates of landing and pickup locations) and one for the terrain characteristics of the tracks, which included the 95th percentiles of slope incline, PRA polygon area for the frequent and large scenarios, runout pressure for the frequent and large scenarios, and the proportion of the track in forested terrain. Terrain characteristics that likely did not exhibit a multimodal distribution (as tested with Hartigan's dip test from the diptest R package by Maechler, 2021) were eliminated from the terrain characteristics distance matrix. Based on our initial explorations, the default values for the weight of the terrain characteristics in the overall distance matrix and the fuzzy parameter that determines the crispness of the cluster solutions were 0.15 and 1.7 respectively. For each ski run, we calculated solutions for 2 to 10 clusters and selected the best solution based on the average silhouette width, one of the commonly used measures for assessing how well the data points are represented by their clusters. Subsequently, the most conservative line within the selected cluster solution was identified by examining the distributions of the terrain characteristics of DEM raster cells touched by the GPS tracks associated with the different clusters. To minimize the influence of outliers, only GPS tracks with a cluster membership probability higher than 0.75 were included in these assessments. The selected cluster solutions and most conservative lines were verified by CMH Galena guides, and if necessary, the algorithm was rerun with slightly modified parameter values to produce a more realistic solution. Figure 3 presents the identified ski lines for several runs to illustrate the output of the clustering algorithm.

2.2.3 Summarizing terrain characteristics

Since the unit of decision-making for the run list coding is an individual ski run, we needed to simplify the terrain information for each run into a single number summary for each terrain characteristic. We carried this out by extracting the mean, median, 95th percentile, and maximum values for PRA polygon area, runout depth, runout impact pressure, runout velocity, and slope incline based on the values of all raster cells within a 20 m buffer of the relevant GPS tracks. For the PRA and runout terrain layers we calculated these summaries for the output of both the frequent and large avalanche scenarios. In addition, we calculated the percentage of each run that was covered by PRA polygons, covered by forest, and the proportion of each run within each cardinal aspect. To further characterize the aspect of the runs we calculated the average northness of each run, which uses a cosine transformation to determine the degree of northern exposure of a run ranging from −1 (south) to 1 (north) (Olaya, 2009). Since separate avalanche hazard assessments are produced for alpine, treeline, and below-treeline areas, we also calculated the percentage of each run in the different elevation bands using elevation thresholds from local guides (alpine > 2250 m, treeline 2250–1850 m, below treeline < 1850 m). Finally, we extracted the maximum and minimum elevation of each run.

2.2.4 Operational considerations

The final component of run characterization aims to capture the operational perspective of each run. One key source of this information was the work of Wakefield (2019), who developed a ski run characterization survey to capture the guides' personal perception and operational knowledge of their skiing terrain. The majority of the survey data is categorical, and the guide characterizations are based on “typical” conditions for each run. The collected information contains a wealth of knowledge from CMH guides, but the present study only incorporates a limited subset including (a) whether weak layers are intentionally managed by destroying them on the surface using skier traffic, (b) whether a run fills a specific operational role (e.g., lunch run or destination run), (c) the approximate flight distance required to access a ski run, (d) the overall ski quality of the run, and (e) the overall accessibility of the run and the landing zone for each run.

2.3 Current conditions

There is a multitude of condition factors that can impact run list coding at CMH Galena, but in this paper we present a relatively sparse model that focuses on the major decision drivers. We selected the variables based on the operational experience of Roger Atkins, a long-time guide at CMH Galena, and looked for relationships within the data. In the absence of high-quality weather station data in our study area, we relied on field observations, lodge weather observations, and daily avalanche hazard assessments to capture the current conditions. We also included the following daily-changing operational factors relevant to the daily run list coding: (a) the percent of the tenure that was observed on the prior day and (b) how long it had been since the run had been skied last. In addition, we included (c) whether the guiding program was on a weekly exchange day. When guests change, guide teams are swapped out, and operational logistics such as transporting food and equipment to the lodge are handled, which impact daily operations. These factors, which are independent from the terrain hazard or avalanche hazard conditions, help to incorporate real-world operational considerations that have an impact on the decision-making process. The following sections describe how the condition variables were derived in detail.

2.3.1 Weather conditions

Snowfall loading rates are some of the most critical factors to determine the size and likelihood of avalanches. Therefore, we included three variables related to snow loading in our decision-making models: the height of new snow over the past 72 h (hn72), 24 h (hn24), and 12 h (h2d) as recorded in the daily field observations and morning lodge observations from the guiding team. We also included the daily average height of snow (hs) observed in the field as a proxy for the overall snow coverage in the tenure. Additional weather factors captured from guides' field observations include wind speed, sky cover, and current precipitation rate. As an indicator of seasonal changes to operational practices and general mountain conditions, we also included the time of season as an ordinal variable (early winter, mid-winter, early spring, and spring).

2.3.2 Avalanche conditions

To capture the guiding team's understanding of the avalanche hazard conditions, we extracted daily avalanche hazard ratings for each elevation band, avalanche likelihood and avalanche size information of the identified avalanche problems, and the strategic mindset from their morning assessments (Atkins, 2014; Statham et al., 2010, 2018). Since recent avalanche observations play a large role in avalanche hazard assessment, we also included the total number and maximum size of the avalanches observed within the CMH Galena tenure from the past 72 h and the past week. We elected to separate avalanche likelihood and size information for persistent and non-persistent avalanche problems to capture the effect of different types of avalanche problems on the decision-making process more precisely.

2.3.3 Run coding

The daily run list codes are the output variable for our decision-making models. At CMH Galena the conditionally open (yellow) run code is rarely used; therefore we elected not to include that run code in our analysis. While working with Roger Atkins to understand the CMH Galena run list coding process, we realized that transition periods are the most interesting and useful target for a decision-making model as they indicate a change in operational conditions from the status quo. However, these transition periods are relatively infrequent, only accounting for roughly 11 % of the run list codes in our dataset, while runs remain red in roughly 18 %, remain green in roughly 59 %, and remain black in roughly 12 % of run list codes. To maximize the utility of the decision support tools we constructed our target variable to explicitly highlight transitions from the prior day's run code. The run list target variable in our models includes five categories: “closing”, “status black”, “status red”, “status green”, and “opening”. We consider runs transitioning from “green” to either “red” or “black” as the run “closing”. Conversely, anytime a run transitions from “red” or “black” to “green”, we consider it “opening”.

We also included the run list coding from the prior day as a variable in our decision-making models. This captures the iterative nature of the run coding, where codes are updated daily based on prior observations and new information. Including the prior run list code as an anchor for the daily run list coding is realistic to the real-world decision-making process and allows us to explicitly identify periods of transition within the run coding.

2.4 Model development and evaluation

We tested three different statistical models to develop decision-support tools for the run list coding process: a Bayesian network (BN), random forest (RF), and extreme gradient boosting (XGB). The BN approach has the advantage of being explicitly grounded in expert understanding of the decision-making process. We selected the random forest (RF) model because it is a commonly used model across a variety of domains, including other applications in the avalanche field (Mayer et al., 2022; Richter et al., 2023), and extreme gradient boosting (XGB) because it is a well-known state-of-the-art model with high predictive performance (Chen and Guestrin, 2016).

2.4.1 Bayesian network

Bayesian networks (BNs), also known as belief networks or probabilistic graphical models, are a type of statistical model that are used to represent and analyze uncertain complex relationships among multiple variables that include both inputs and outputs (Scutari and Denis, 2021). The foundation of a BN is a directed acyclic graph (DAG), which illustrates variables as nodes and relationships between them as arcs. The graphical structure of a BN cannot contain any cycles, and nodes that are not connected by an arc are assumed to be conditionally independent given their parents (Fenton and Neil, 2019; Scutari and Denis, 2021). One major advantage of BNs over other types of modern machine learning algorithms is that the structure of the network can be constructed based on input from domain experts, which allows the network to take on a form which is authentic to real-world decision-making thought processes.

The quantitative foundation of BNs is conditional probability tables (CPTs), which can be estimated manually or based on observed data for each node. BNs have been applied in a variety of fields, including medical diagnosis and operational risk analysis (Fenton and Neil, 2019). Once a BN has been estimated, it can be used for a variety of tasks related to probabilistic inference, prediction, and decision support, which make BNs well-suited to our task. In this analysis we used the R packages bnlearn (Scutari, 2010) and gRain (Højsgaard, 2012) to fit and apply the BN.

The main driver for deciding what nodes to include in the BN and how to set arcs between nodes was the expert opinion of Roger Atkins. The primary objective of this step was to capture realistic patterns of decision-making in the arc pathways within the DAG of the BN. We then used the data described in the previous section to calculate the conditional probability distributions of the BN based on the structure provided by the domain expert.

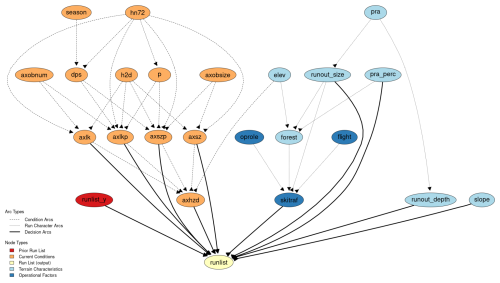

Figure 4Directed acyclic graph (DAG) for run list BN at CMH Galena. Arcs are defined based on the expert opinion of our collaborating guide as well as natural physical relationships of avalanche terrain characteristics. Node abbreviations and variable characteristics are described in detail in Appendix A.

We constructed the DAG based on the thought process of using three different types of relationships to set arcs (Fig. 4). First are arcs between run characteristic nodes, which represent the natural physical relationships in avalanche terrain and operational relationships in the guide survey nodes. Second are arcs between condition variables, which represent the relationships between observations and guides' assessments, which are roughly modeled after the theoretical foundation of the conceptual model of avalanche hazard (Statham et al., 2018). Third are decision arcs that connect nodes that could have a direct impact on how a run is coded.

To reduce the complexity of building the BN and make it easier to understand, we used categorical variables for all the nodes in the network. This required converting the numeric variables into categorical variables, which we undertook manually, aiming to minimize the number of categories with very small proportions of the data to reduce the overall size of the conditional probability table for the run list output node. Reducing the number of categories in each variable significantly reduces the computer processing time to apply the BN in a predictive capacity and leads to more accurate predictions. See Appendix A for a list of all variables and to compare the original numeric distribution to the categorized version of the variables.

2.4.2 Machine learning approaches

Both machine learning approaches are based on decision trees but differ in their specific implementation. A decision tree is a common statistical approach to classification where a simple tree structure is built to split data into leaves or nodes based on a training dataset that includes both feature values and the desired classification. The internal nodes of a decision tree represent a test on an individual feature in the data, with the branches descending from each internal node representing the outcome of the test (Breiman, 2001). The terminal nodes, or leaves of the tree, represent the classification prediction. One of the main advantages of decision trees is that they automatically detect relationships within the data and naturally handle interactions among features without the analyst needing to a priory specify them (Kuhn and Johnson, 2013). However, when applied to predictive problems, individual decision trees tend to overfit the sample observations, which means they do not tend to generalize well to observations outside of the training dataset.

Random forest (RF) is an ensemble-based machine learning approach which uses hundreds of independent decision trees to produce more accurate and generalizable predictions. Independent decision trees are fit by using a random subsample of the training data, using a process called “bagging”, and the feature used at each node within the tree is selected from a random subset of all the features available. These practices allow the individual trees within the RF to be substantially different from one another, which improves overall performance of the ensemble (Breiman, 2001). A majority voting scheme is used to determine the prediction of the RF, which means that whichever classification level gets the most votes of all the trees becomes the overall prediction.

While RF uses bagging and random sampling to create a forest of independent trees, extreme gradient boosting (XGB) uses a technique called “gradient boosting” to sequentially build decision trees that correct the errors made by previous trees (Chen and Guestrin, 2016). Gradient boosting creates an ensemble of simple weak learners, defined as a simple classifier with performance slightly better than random chance, to form a strong learner, defined as a classifier that achieves arbitrarily good accuracy, by optimizing a loss function when each new tree is fit. Essentially each subsequent weak learner in the ensemble focuses on the misclassified cases from prior weak learners to focus training on cases the model previously got wrong. This method allows XGB to produce classification models with reduced bias and variance, which leads to better predictive performance. Compared to RF, XGB tends to build more complex models that capture more nuanced patterns in the data. Fitting XGB models typically requires more computer processing time and tuning of several model parameters to achieve optimal results.

To fit the machine learning models to our dataset we included all variables from the BN and added additional variables related to the run list decision-making process as determined by conversations with an expert guide, and we evaluated whether they were improving predictive performance. While the RF model easily adopted the categorical variables that we developed for the BN approach, we manually converted the categorical variables into a series of binary variables (also known as one-hot or dummy encoding) before including them in the XGB method using the R package fastDummies (Kaplan, 2020). To ease interpretation of the XGB model we elected to use the native numeric representations of the run characteristic and condition variables where possible. In addition, we tested treating ordered factors as both dummy-encoded variables and as ordered integers in the XGB model.

To tune the RF model parameters, we performed a grid search on the “mtry” parameter, which determines how many variables are randomly sampled at each split in the decision trees using the R packages caret (Kuhn, 2008) and RandomForest (Liaw and Wiener, 2002). For the XGB model we used the “gbtree” booster and carried out a more extensive process of tuning the “nrounds”, “eta”, “gamma”, “max_ depth”, “subsample”, “colsample_bytree”, and “min_child_ weight” model parameters using the R packages caret (Kuhn, 2008) and XGBoost (Chen and Guestrin, 2016). We aimed to optimize overall accuracy and used the default “softmax” objective function from the summary function “multiClassSummary”. Our process for tuning the XGB model parameters required five steps: (1) roughly tune “nrounds”, “eta”, and “max_depth” while limiting the max value of “nrounds” to 1000 to limit processing time of the tuning procedure and using default values for other parameters; (2) tune “max_depth” and “min_child_weight” using “nrounds” values from 50 to 1000 using tuned “eta” values from round 1 and defaults for other parameters; (3) tune “colsample_bytree” and “subsample” using tuned parameters for “eta”, “max_depth”, and “min_child_weight” while using a default parameter for “gamma”; (4) tune “gamma” using “nrounds” values from 50 to 1000 and tuned parameters for all other values; and finally (5) tune “eta” and “nrounds” a second time using tuned parameters for all other inputs and testing a larger range of “nrounds” values from 100 to 5000. This process is intended to sequentially tune parameters in batches to limit computer processing time while still testing a wide range of potential parameter combinations.

We tested both the RF and XGB models with and without class weights, which are intended to improve accuracy for imbalanced classification tasks. We used a class weight scheme based on the inverse proportion of the sample size so that errors in classes with lower sample sizes are penalized more heavily than classes with larger sample sizes.

2.4.3 Model evaluation

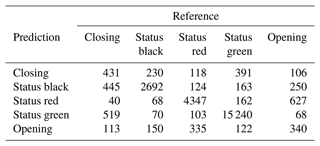

To assess how well our decision-making models match real-world decisions, we used each model as a predictive tool to classify whether runs would be coded as “closing”, “status black”, “status red”, “status green”, or “opening” based on run characteristics and current conditions. We used 70 % of our run list dataset to train the models and 30 % to test the prediction accuracy. To compare the models, we used a multiclass confusion matrix. Specifically, we examined the overall accuracy and Cohen's kappa, which are metrics for the overall performance of the classifier, and the sensitivity of individual classes to evaluate performance in greater detail. The overall accuracy is the proportion of cases where the model predicts the same run list code as the CMH guides. Cohen's kappa measures how well the classifier performs compared to a model that simply predicted the most frequent class, also known as the “no information rate”. In addition to the confusion matrix approach, we calculated the area under the receiver operating curve (AUROC) for each model using the R package pROC (Robin et al., 2011). AUROC considers the sensitivity and specificity of the model and evaluates the overall performance of the classifier, with an AUROC of 0.5 indicating random chance and 1.0 indicating a perfect classifier. Since our output variable has multiple classes, the AUROC is calculated using a one-versus-one approach, with each class considered as the positive case and compared against every possible pairwise combination of classes. The model AUROC is then calculated as the average of the pairwise AUROC values (Hand and Till, 2001; Robin et al., 2011).

To better understand the patterns captured in the RF and XGB models, we also looked at the feature importance for these models. Feature importance provides an assessment of which variables contribute to the classification task most strongly, which is determined by the mean decrease in the Gini coefficient (Breiman, 2001). This measures how much each feature contributes to the homogeneity of the nodes in the decision trees, which leads to more accurate classification. To dig deeper into understanding the relative contribution of different features, we created Shapley additive explanation (SHAP) plots, which are a more advanced method for interpreting black-box machine learning models (Lundberg and Lee, 2017; Molnar, 2022). In addition to measuring which features strongly contribute to the classification task, SHAP plots also show how the range of values for each feature contributes to the classification (Flora et al., 2024). SHAP plots can be calculated for both the overall model and the individual levels of the classification. These methods help to visualize how the machine learning models create their classifications and provide some insight into the patterns that drive these black-box models.

3.1 Bayesian network

Our decision-making network aimed at capturing the daily run list coding at CMH Galena contains 24 nodes and 44 arcs (Fig. 4). To fit the BN, we used 63 581 cases to train the network and kept 27 254 cases to test the accuracy of the BN. Overall, the network structure represents the complexity of the decision-making process by containing many potential pathways to the run list node. This realistically represents the real-world decision-making process, where the driving factor for the coding of runs depends on a multitude of factors related to current conditions and run-specific characteristics.

3.1.1 Input nodes – terrain characteristics and operational factors

We included seven nodes in the BN that represent terrain characteristics from the avalanche terrain model output (light blue nodes). Potential avalanche release area size (pra) represents the 95th percentile start zone polygon size for the frequent scenario within each ski run, which is categorized into four classes (0–10 000, 10 000–15 000, 15 000–20 000, > 20 000 m2). PRA size is aimed at capturing the high end of the distribution of avalanche release areas that could be triggered on the run. The percentage of the ski run that is within potential avalanche release areas (pra_perc) intends to capture the overall amount of exposure to areas where avalanches could be triggered along the run (0 %–25 %, 25 %–40 %, 40 %–55 %, 55 %–100 %). Runout size (runout_size) represents the 95th percentile impact pressure from the large avalanche simulation, which was included to represent the high-end potential avalanche runout size, or overhead hazard, during periods when large avalanches are possible (0–50, 50–100, 100–150, > 150 kPa). Runout depth (runout_depth) is determined by taking the 95th percentile of the runout height for the frequent avalanche scenario, which captures the potential of terrain traps to cause deep burial in the case of a relatively small human-triggered avalanche (0–1, 1–1.5, 1.5–2, > 2 m). The node for run steepness (slope) represents the steepest portion of each run by using the 95th percentile of its slope angle distribution, which is then categorized into four classes (0–35, 35–40, 40–45, > 45°). We chose to use the 95th percentile value to capture the upper end of the distribution for PRA size, slope angle, runout size, and runout depth instead of the maximum value to minimize the potential for local DEM artifacts to give unrealistically high maximum values. To represent the elevation (elev) of a run we used all the elevation bands a run includes, so runs that cover multiple elevation bands include multiple elevation bands (alpine–treeline, alpine–treeline–below-treeline, treeline–below-treeline, below treeline). Forest cover (forest) was summarized based on the percentage of raster cells within 20 m of GPS tracks that are forested and split into categories of 0 %–25 %, 25 %–50 %, 50 %–75 %, or 75 %–100 %.

There are several inherent correlations among the run characteristics that need to be accounted for in the model with arcs. PRA size is connected by arcs to runout size and runout depth because the surface area of the start zone has a strong impact on potential avalanche size and burial depth. PRA percent is connected by an arc to forest cover percent (forest) because avalanche start zones tend to inhibit the growth of forests. Elevation band (elev) and runout size also have an arc connected to forest because both large avalanche paths and higher elevations inhibit the growth of forests.

The operational factors included in the BN (dark blue nodes) are whether skier traffic is used to mitigate weak layer development (skitraf), the flight distance from the lodge to the run (flight), and whether the run serves a specific operational role (oprole). The nodes oprole and flight both have arcs connecting to skier traffic because runs that are maintained with skier traffic tend to be in closer proximity to the lodge and serve a specific operational role because they can typically be used during periods of elevated avalanche hazard. The skier traffic node also has input arcs from forest and runout_size because forest cover can break up potential avalanche start zones into multiple smaller start zones, which are more suitable for this type of mitigation, and runs with exposure to large overhead avalanche paths are typically not suitable for skier mitigation.

3.1.2 Input nodes – current conditions

Twelve nodes are included in the BN model to represent current avalanche conditions (orange nodes). These nodes include both direct observations and guide assessments of the conditions. The relationships among these condition variables are driven by physical principles and the avalanche hazard assessment process described by the conceptual model of avalanche hazard (Statham et al., 2018). The primary weather condition variables in the BN are the height of new snow within 72 h (hn72) and the height of new snow within 12 h (h2d). These nodes are included in the model to represent the amount of new snow loading within the current storm and overnight respectively and naturally have arcs connected to non-persistent avalanche size (axsz), non-persistent avalanche likelihood (axlk), size of persistent avalanches (axszp), and likelihood of persistent avalanches (axlkp). In addition, hn72 has arcs connected to the status of persistent (p) and deep persistent (dps) avalanche problems, which have values of 0 when the avalanche problem is not active and 1 when active. Time of season (season) is a secondary condition variable that is oriented towards the development of snowpack characteristics over the course of a winter season. Season is connected to the status of deep persistent avalanche problems, which tends to be less likely in the early winter and more likely in the mid-winter, early spring, and spring. The number of avalanche observations (axobnum) and maximum size of avalanche observations (axobsize) within 72 h from the guides' field observations represent their direct evidence of current avalanche activity. There are arcs connecting observed avalanche size to expected avalanche size for persistent and non-persistent avalanche problems, as well as from the number of observed avalanches to expected avalanche likelihood for both persistent and non-persistent avalanche problems. The statuses of persistent and deep persistent avalanche problems are each connected with arcs to persistent avalanche likelihood and size. As described in the conceptual model of avalanche hazard (Statham et al., 2018), avalanche size and likelihood nodes for both persistent and non-persistent avalanche problems have arcs to daily maximum avalanche danger rating (axhzd), as they are the key determining factors of avalanche hazard. Since the daily maximum avalanche danger rating is specific to the elevation bands included in each run, an arc connects elevation band to avalanche hazard.

3.1.3 Output node

The target output node is run list, which captures the change in the run list status from the prior day. This node has input arcs from avalanche size, avalanche likelihood, persistent avalanche size, persistent avalanche likelihood, avalanche hazard, runout size, runout depth, percent of PRA, slope angle, skier traffic mitigation, and the run list status from the prior day (runlist_y). By combining condition variables, run characteristics, and prior status, we aimed to capture the interactions between the range of potential factors that drive the run coding decisions for different types of runs.

3.1.4 BN performance

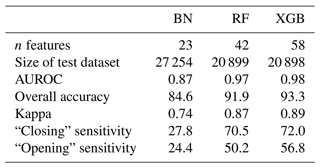

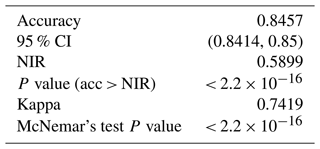

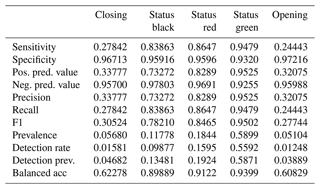

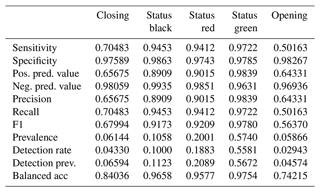

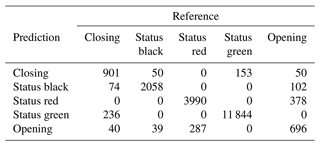

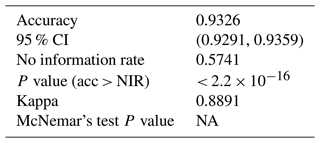

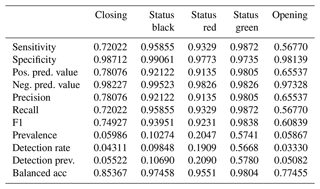

The BN has an overall accuracy of 84.6 % compared to the test cases with an area under the receiver operating curve (AUROC) of 0.87 and a kappa statistic of 0.74 (Table 1). The no information rate for the BN sample is 59.0 %, which is the class frequency of “status green”. For the transition classes “closing” and “opening” the BN has a sensitivity of 27.8 % and 24.4 % respectively. For complete results of the confusion matrix for the BN model, see Appendix B. The BN fitting process does not include a method for class weighting. However, as an alternative to prioritize the performance of the transition classes we tested manually setting the classification threshold for “closing” and “opening” to 25 % instead of simply selecting the class with the highest probability. We found that the performance in transition classes improved substantially from 27.8 % to 40.5 % for “closing” and 24.4 % to 34.7 % for “opening”. However, manually setting the classification threshold to improve sensitivity for transition cases results in a decrease in overall accuracy and Cohen's kappa, from 84.6 % to 81.7 % and 0.74 to 0.70 respectively.

3.2 Random forest

3.2.1 Features included

Due to missing data in the additional features for the RF, the overall dataset was slightly smaller than the BN, with 48 755 cases in the training dataset and 20 899 in the testing dataset. The final set of features was tested by trial and error and evaluated against the accuracy metrics from the testing dataset. Our grid search for tuning the “mtry” parameter resulted in a value of 9, which means that nine features were randomly selected and tested at each split while growing the decision trees. We used the default value of 500 for the number of trees in the RF. To account for the imbalance in the run list classification target variable, we used inverse proportional weighting to penalize errors in the minority classes more heavily while training the model. This improved performance for the transition periods closing and opening, which makes the model more useful as an operational decision support tool.

The additional terrain characteristics included in the RF model are categorical versions of the 95th percentile PRA size for the large avalanche scenario (pra30y), average PRA size along the run for the frequent (pra_mean) and large scenarios (pra30y_mean), 95th percentile runout pressure for the frequent scenario (runout_press), 95th percentile runout height for the large scenario (runout_height_1m), and the aspect (aspect) of the run with the highest proportion of raster cells within 20 m of GPS tracks. These additional features help to capture the unique characteristics of each run based on the exposure to avalanche start zones and runout zones.

We also added several additional operational factors to the RF model to help capture some of the more nuanced operational considerations that can impact run list coding. Those features are the number of days since the run was last skied (last_skied), the overall quality of skiing on the run (ski_quality), overall accessibility of the run (access_gen), accessibility of the landing zone (access_land), and whether there was an exchange of guests or guides (exchange) taking place that could impact operational logistics.

To capture the current conditions in more detail, we also added additional features to the RF model focused on weather conditions, field observations, and avalanche hazard assessment. The weather condition features we added are the height of new snow in the past 24 h (hn24), the wind speed observed from the field (wind), sky cover observed from the lodge in the morning (sky), and the current precipitation rate from the lodge in the morning (precip). Additional field observations include the total snowpack height (hs) observed in the field on the day prior, the percent of the tenure observed on the prior day (perc_observed), and the maximum size of avalanche observations over the past week (axobs_sizeweek). We also replaced the daily max avalanche hazard feature from the BN with avalanche hazard ratings for each elevation band (alpine – alp_hzd, treeline – tl_hzd, below treeline – btl_hzd). To capture the shared mindset of the guiding team, we included the strategic mindset (mindset) as a feature for the RF model. Finally, we removed the status of deep persistent avalanche problem and persistent avalanche problem from the RF model because the persistent likelihood and size features capture this information implicitly, and the overall accuracy of the RF decreased with these avalanche problem features included.

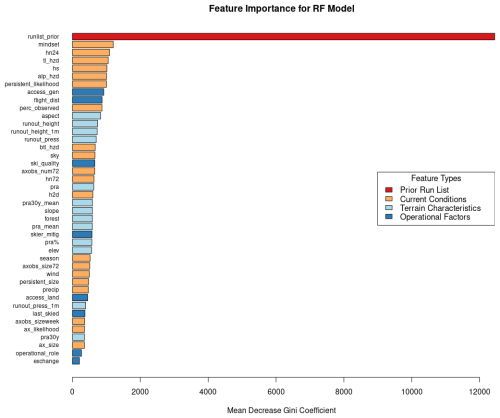

3.2.2 Feature importance

The feature with by far the highest feature importance for the RF model is the run list from the prior day, which naturally emerges from the fact that in roughly 90 % of cases the run list code stays the same as the prior day (Fig. 5). Features 2 through 7 by feature importance are all related to current conditions, with the most important features being strategic mindset, new snow in past 24 h, treeline hazard rating, overall height of snow, alpine hazard rating, and likelihood of persistent avalanches. The below-treeline hazard rating is also ranked relatively highly in 15th. The remaining features that capture snow loading, 3 d snow loading (hn72) and 12 h snow loading (h2d), are ranked 19th and 21st by feature importance. The avalanche observation features that are most important are the percent of the tenure observed on the prior day (perc_observed) and total number of avalanches observed over a 3 d period (axobs_num72), which are ranked 10th and 18th.

Figure 5Feature importance for RF model for all classes of the run list output variable color-coded by the feature type.

Operational features with the highest feature importance are overall accessibility of the run (access_gen) ranked 8th and flight distance (flight_dist) in 9th. Other highly ranked operational features are the quality of the skiing experience on the run (ski_quality) in 17th and whether a run is maintained by skier traffic (skier_mitig) in 26th. The least important features in the RF model are operational role (op_role) in 41st, which designates runs as having a specific value to operational logistics beyond physical characteristics, and whether the guiding program is on an exchange day (exchange) in 42nd.

The terrain characteristics that are ranked highest by feature importance occupy positions 11 through 14 and are the aspect of the run (aspect), runout height for the frequent scenario (runout_height), runout height for the large scenario (runout_height_1m), and runout pressure for the frequent scenario (runout_press). Features related to PRA are generally ranked lower compared to those runout features, with the most important PRA features being PRA size for the frequent scenario (pra) ranked 20th, mean size of PRA for the large scenario (pra30y_mean) ranked 22nd, mean size of PRA for the frequent scenario (pra_mean) ranked 25th, and percent of raster cells in PRA polygon areas (pra %) ranked 27th.

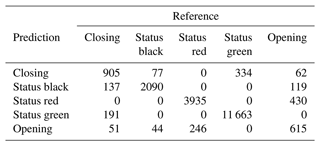

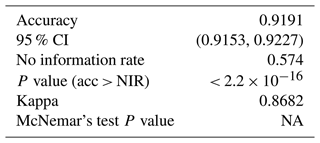

3.2.3 RF performance

The RF overall accuracy is 91.9 % with an AUROC of 0.97 and a kappa statistic of 0.87. The no information rate for the RF dataset is 57.4 %, which is the class frequency of status green. The improvement in predictive performance of the RF model over the BN is 7.3 percentage points in overall accuracy with 0.10 for AUROC and 0.13 for kappa. The sensitivity for the “closing” and “opening” classes for the RF is 70.5 % and 50.2 % respectively, which is an improvement of 42.7 and 25.8 percentage points respectively compared to the BN with default classification thresholds. For complete results of the confusion matrix for the RF model see Appendix B. Tuning the RF model without class weighting resulted in a higher overall accuracy by 0.8 percentage points and a higher Cohen's kappa by 0.01. However, the class-weighted RF has higher sensitivity for the closing and opening classes by 15.1 and 9.7 percentage points respectively.

3.3 Extreme gradient boosting

3.3.1 Features included

For the XGB model we used the same features as the RF. The only features that were dummy-encoded were the categorical strategic mindset and the run list from the day prior. For elevation, we switched from categorizing which elevation bands are part of each run in the RF to calculating the percentage of each run in the alpine (perc_alp), treeline (perc_tl), and below-treeline (perc_btl) elevation bands. We also included the maximum (elev_max) and minimum elevation (elev_min) for each run. To accurately capture the influence of slope aspect we switched from using a categorical variable representing the majority aspect in the RF to calculating the average northness of each run (northness). Sample sizes for the XGB training and testing datasets are almost identical to the RF, with 48 756 cases for training and 20 898 cases for testing. The grid search procedure to optimize the XGB model parameters resulted in “nrounds” of 4400, “eta” of 0.05, “max_depth” of 6, “gamma” of 0.05, “colsample_bytree” of 0.4, “min_child_weight” of 2, and “subsample” of 1.

3.3.2 Feature importance

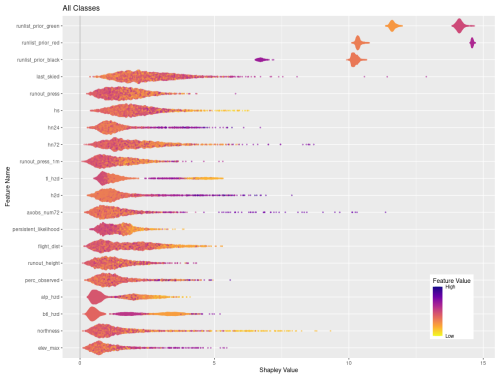

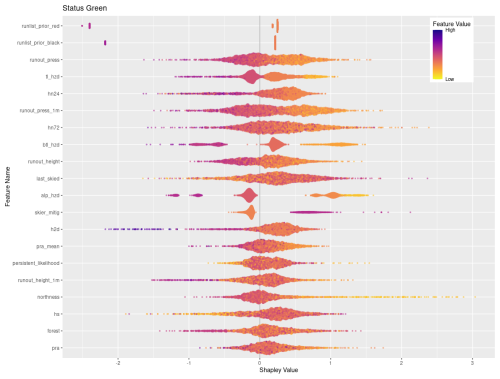

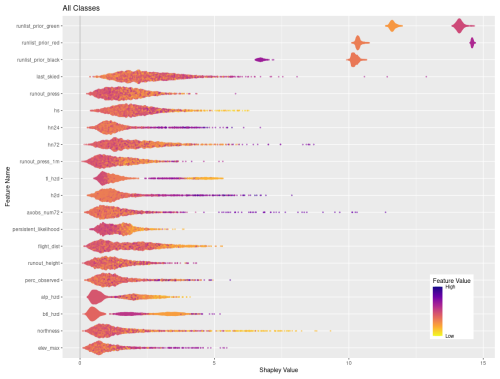

Figures 6 and 7 visualize the feature contributions by plotting the SHAP values for all possible outcomes of the run list target variable (Fig. 6) as well as for the individual transition classes “closing” and “opening” (Fig. 7). The features on the SHAP plots are ordered on the y axis by their feature importance, and the x axis shows the SHAP value. The top three features by feature importance for the overall classification are the dummy-encoded features that represent the status of the run from the prior day. This indicates that the prior day's run list code is the strongest predictor of the current day's run list code, which makes sense since the run list code only changes in roughly 10 % of cases. The points along the x axis for each feature show the distribution of feature values ranging from low (yellow) to high (purple). The distribution of the points is shown by the shape of the bee swarm plot, with a higher density of feature values shown as a thicker section of the point distribution. For the top two features in Fig. 6, prior run list green and prior run list red, we see that high feature values have high SHAP values, which indicates that the prior run list code being green or red (coded as 1 for dummy variables) has a very strong contribution to the run list classification. In contrast the prior run list being black, shown by a high feature value, has a much lower SHAP value. This means that the prior run list being black contributes much less to the run list classification than green or red. Since run list code black is considered a non-decision that can have a variety of reasons unrelated to current conditions or terrain characteristics, it makes sense that this run list code would contribute less to the XGB model predictions.

Figure 6SHAP plot for XGB model with the top 20 features on the y axis ordered by feature importance and x axis showing the SHAP value. The color-coded points show the distribution of the individual features, with hotter colors indicating high feature values and cooler colors indicating low feature values.

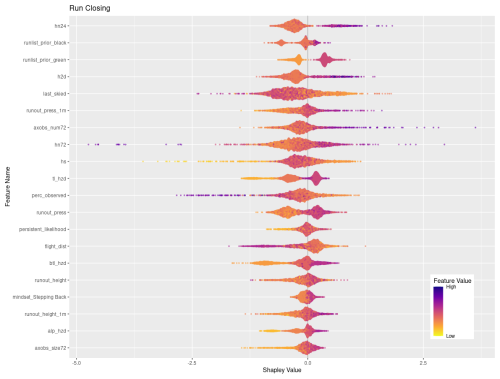

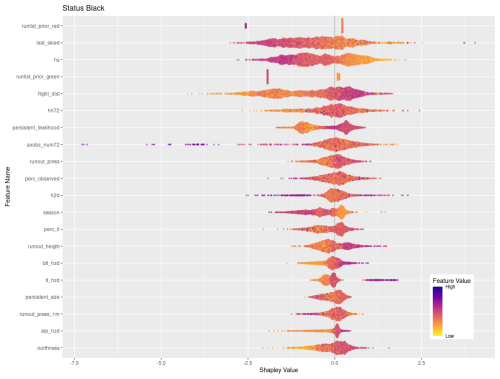

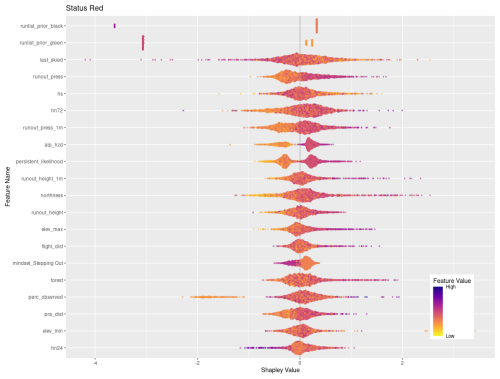

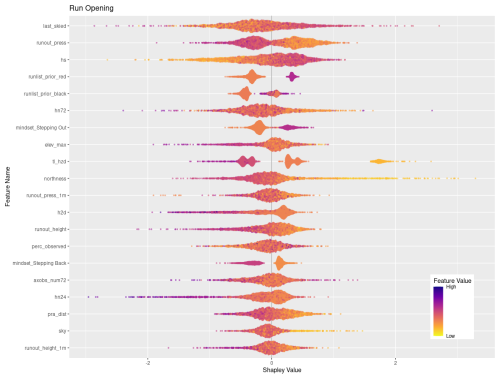

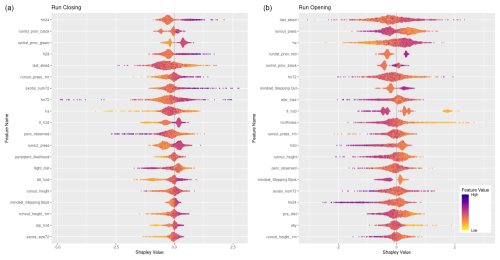

Figure 7SHAP plots for transition classes “closing” and “opening”. Features are ordered on the y axis by their feature importance for each individual class. Negative SHAP values indicate that features are associated with that class being less likely while positive values indicate that the class is more likely. Color-coded points show the relative values of the features with yellows indicating low values and purple high values. Note that the order of features on the y-axis and x-axis range differs for panels (a) and (b). See Figs. C2 and C6 for larger versions of these SHAP plots.

Of the remaining top 20 features shown in Fig. 7 there is a mix of operational factors, terrain characteristics, and current conditions. The operational factors included in the top 20 features are number of days since the run was last skied, flight distance to the run, and percent of tenure observed on the prior day. For both number of days since last skied and percent of terrain observed, higher values make a stronger contribution to the overall classification, whereas lower values of flight distance make a higher contribution to the classification.

The terrain characteristics with the highest contribution to the XGB classification are runout impact pressure from the frequent avalanche scenario, runout impact pressure from the large avalanche scenario, runout height from the frequent avalanche scenario, degree of northness of the run, and the maximum elevation of the run. In general, lower values of runout pressure for the frequent and large scenarios contribute more strongly to the classification, whereas higher values of runout height make a stronger contribution. Runs with low values of northness (i.e., run with southern aspects) make higher contributions, along with runs that start at higher elevations.

A total of 9 out of the 20 top features for the overall classification represent the current conditions. The total snowpack height and all three snow loading features (72, 24, and 12 h) are included. The avalanche hazard rating for all three elevation bands is also included, with a general trend that low or high values make stronger contributions compared to intermediate values. This is intuitive because high avalanche hazard or low avalanche hazard both represent hazard scenarios with greater certainty about current conditions, whereas a wide range of conditions can be observed under moderate or considerable avalanche hazard ratings. High values in the number of avalanche observations in a 72 h period make a strong contribution to the classification. Finally, the avalanche likelihood for persistent slab avalanches shows that the lower values tend to have a stronger impact on the classification.

Feature importance for transition classes

Looking at the SHAP values for closing and opening specifically can provide additional insights into how individual features and feature values contribute to these particular decisions (Fig. 7). To simplify interpretation, we removed the prior run list feature that corresponds to the current run code (i.e., prior run list red for closing class), which has the highest feature importance for both classes since to open or close the run must change the status from the day prior. A total of 15 out of the 20 most important features are shared by both response classes, although the order of importance differs between the two classes. The 15 shared features include a mix of current conditions, terrain characteristics, and operational considerations. In general, the relationship of feature values and SHAP values is reversed for opening versus closing runs. For example, high feature values for the height of new snow in 24 h (hn24) contributes strongly to run closing (the more new snow, the more likely a run gets closed), whereas lower values of hn24 are more important for run opening, as expected. However, the overall importance of hn24 for run openings is much lower than for run closings as indicated by the difference in feature importance (rank 17 versus rank 1).

The additional 5 features that are only included in the top 20 features for run closing are the likelihood of persistent avalanches, flight distance to access the run, below-treeline and alpine avalanche hazard ratings, and maximum avalanche observation size over 72 h. Low feature values of persistent avalanche likelihood align with negative SHAP values, indicating that when persistent avalanches are less likely, runs are less likely to be closed. Longer flight distances appear to also make a negative contribution to runs being closed, which may indicate that runs that are further from the lodge tend to change from open to closed less frequently. Avalanche hazard ratings for all elevation bands have a similar distribution of feature values and SHAP values, with lower ratings leading to fewer runs closing and higher ratings leading to more runs closing. Similarly, field observations of smaller avalanches contribute less to the decision to close runs than observations of larger avalanches.

The features that are only included in the top 20 for run openings are the strategic mindset stepping out, maximum elevation of the run, degree of northness, total distance in PRA, and percentage of sky cover. When the guides' strategic mindset is stepping out, runs are more likely to open, which is unsurprising but also encouraging as their stated mindset corresponds to patterns in their run list practices. Run elevation and aspect seem to contribute more to the decision to open a run for low-elevation and more southerly runs than for more northerly or high-elevation runs. The percentage of a run that is within PRA has the expected effect, with lower values contributing to run openings more heavily. Finally, runs tend to open more when the percentage of sky cover is low. This is likely due to increased access to a larger portion of the CMH Galena tenure due to greater visibility and flying conditions from stable weather. These differences reveal some of the unique patterns identified by the XGB model in the decision-making drivers that impact the run list coding process.

Feature importance for status quo classes

The overall factors that make the largest contribution to runs staying open are their exposure to avalanche runout zones, the overall avalanche hazard conditions, how much recent snow loading has taken place, and whether the runs are maintained using skier traffic. Within the top 20 features by feature importance for status green, there are four different avalanche runout features, with low values in all these features making a strong contribution to runs remaining open (Appendix C). The avalanche hazard ratings for alpine, treeline, and below treeline are also included in the top 20 features, with low values making a strong contribution to runs remaining open. The same can be said for the three snow loading features, where low values contribute more strongly to runs staying open. Other terrain characteristics that contribute to runs staying open are whether they are maintained by skier traffic to mitigate persistent weak layers on the surface, lower values in the mean and 95th percentile PRA size for the frequent avalanche simulation, and runs with southern aspects.

The characteristics of runs that tend to remain closed are the opposite of runs that tend to remain open, with higher runout exposure and an overall higher percentage of PRA. Runs that are further away tend to remain closed more often and so do runs that start at higher elevations and have a more northerly exposure. Conditions that lead to runs remaining closed include higher avalanche hazard ratings in the alpine elevation band and higher likelihood of persistent avalanches. Snow loading over a 72 h period contributes to the decision to keep a run closed more strongly than the 24 h or 12 h snowfall, which plays a more important role in closing a run in the first place.

When a run is coded black it is simply not considered for the day, which is not necessarily an indication that it was deemed unsafe under the current conditions. Instead, there are a wide variety of operational factors that could play into whether a run is discussed during the morning guides' meeting. Our analysis reveals several features with strong contributions to black run codes that seem to have stronger ties to operational decision-making than hazard evaluation. For example, runs that are often skied tend to a make stronger contribution to being coded black, which may be an indication that guides use this code to put frequently used runs on pause during uncertain conditions instead of closing them (Appendix C). This interpretation is further supported by the observation that periods of high avalanche hazard at the treeline and below-treeline elevation bands also contribute strongly to runs being coded black. Similarly, periods of higher likelihood for persistent avalanches tend to contribute to runs being coded black. Other operational considerations that contribute to runs being coded black include the flight distance, with runs further away making a strong contribution to black run codes, as well as the height of snow, with low overall snowpack heights making a strong contribution to black run codes. This pattern is likely related to more runs being coded black at the beginning of the season.

3.3.3 XGB Performance

The XGB model has the highest overall accuracy at 93.3 %, an improvement of 1.4 percentage points over the RF. The AUROC and the kappa for the XGB model are 0.98 and 0.89 respectively, which are improvements of 0.01 for AUROC and 0.02 for kappa over the RF model. The sensitivity of the transition classes for the XGB model is 72.0 % for closing and 56.8 % for opening, an improvement over the RF by 1.5 and 6.6 percentage points respectively. We used the same class weight scheme for the XGB model as the RF model, with weights determined using the inverse proportion of the class frequency. Tuning the model without class weights resulted in a slightly higher overall accuracy by 0.3 percentage points. However, the improvement in sensitivity for the transition classes “closing” and “opening” of 6 percentage points for both classes justifies using the class weights for our application. A subset of the confusion matrix results is presented in Table 1, but interested readers are referred to Appendix C for the complete output.

The objective of this research is to better understand the decision-making process of professional guides in terms of their daily run list coding and develop models that can meaningfully capture this decision-making process to provide decision support by producing run list predictions based on past decisions. In this section we compare the relative strengths and weaknesses of the three different models, discuss the insights that each model provides into the decision-making process, and reflect on potential applications and implications for incorporating this type of predictive model into the real-world decision-making process in mechanized skiing.

4.1 Summary and comparison of models

While the predictive accuracy is clearly much higher for the machine learning models than the BN, there are pros and cons to both approaches. The biggest benefit of the BN is the process of manually constructing the decision-making network by working closely with domain experts to understand the nature of the decision-making process. This collaboration required considerable time and energy to drill down into the details of the decision drivers, but the insights gained from this process benefitted not only the construction of the BN model but also the curation of the datasets and selection of features for the machine learning models. The DAG that forms the foundation of the BN is a beneficial byproduct of this process which helps to visualize the decision-making process and captures the theoretical underpinnings (Fig. 4). In addition, the combination of being based on expert input and having the predicted probabilities calculated as a simple conditional probability of input nodes makes the output of the BN much more transparent and therefore possibly more trustworthy for adoption by practitioners.

Even though the interviews with the domain expert identified many factors that contribute to the decision-making process, the best performing BN was limited to 23 input nodes (Fig. 4). We found that including additional input nodes decreased the predictive performance and significantly increased the computation time required to process the predictions. The increase in processing time is due to the exponential growth of the conditional probability table for the output node as more input nodes are added (Fenton and Neil, 2019). The reason for the predictive accuracy of the BN decreasing when including additional variables is not obvious. Many of the additional variables that we tested in the BN were shown to be strong predictors in the machine learning approaches (e.g., hn24, last_skied). Two potential causes could be that there are strong correlations between these additional input nodes and existing nodes in the DAG or that further increasing the number of arcs directly linked to the output node is creating a much larger conditional probability table causing relatively small sample sizes for each potential combination of input node conditions despite our relatively large overall sample size. Due to this trade-off between computation time and predictive performance with complexity, we manually fit and tested many versions of the BN. To select the final version, we considered both the theoretical accuracy, as determined by our domain expert, and prediction accuracy to arrive at a relatively simple final model. While the BN is a meaningful representation of the high-level decision-making process, the fact that it only includes roughly half as many features compared to the machine learning approaches may prevent the BN from capturing subtle patterns in the decision-making process and therefore contribute to lower overall performance.

Both machine learning approaches performed better than the BN in terms of predictive performance in all accuracy metrics. The advantage of the machine learning models was greatest in the sensitivity of the transition classes closing and opening, with a roughly 2-fold increase in the percentage of cases where the actual run list was a transition class correctly identified. This reveals that the machine learning models are much better at capturing the conditions and terrain characteristics of runs which are likely to transition from closed to open or vice versa. The cause of the machine learning models higher skill in the transition cases is likely due to multiple factors, including the increased number of features in the models, the inclusion of class weights in the model fitting process which intentionally penalizes errors in the transition cases more severely, the ability of decision tree models to naturally integrate all types of interactions between features, and the greater complexity of the machine learning models being able to identify more subtle patterns in the data. Furthermore, the inclusion of strategic mindset likely played a role in the improved performance, while we elected not to include the strategic mindset variable in the BN because we felt it was too much of a high-level summary of the decision-making process. Mindset had a high variable importance for the machine learning approaches, especially for the “stepping out” and “stepping back” mindsets, which explains some of the improved predictive performance during periods when runs were closing and opening. Hence, including strategic mindset in the machine learning approaches allows us to determine the upper limit of predictive accuracy when considering all possible input data.

Between the two machine learning models the XGB showed higher performance across all accuracy metrics compared to the RF, although the improvements were much smaller than the gap between the BN and the machine learning models (Table 1). The largest difference between the RF and XGB was again in the transition classes, specifically the opening class where accuracy improved by 6.6 percentage points over the RF. Since this class has the lowest sensitivity overall, these improvements represent a substantial benefit to model performance. The improvement in accuracy for transition cases in the XGB model is likely due to the boosting approach, which builds an ensemble of decision trees that use misclassified cases to sequentially improve performance. Essentially the model identifies the cases where it is wrong and trains more decision trees to try and improve the fit for those misclassified cases. Through this process the XGB model fitting can focus more training effort on difficult to capture cases and potentially extract more subtle patterns in the decision-making process.

While the XGB model performed best with respect to all predictive accuracy metrics, this improvement comes with a cost from the additional feature engineering to prepare data as well as more complexity and computer resources required to tune model parameters. Finally, both machine learning models are much less transparent than the BN in terms of understanding the pathway to how the models produce their predictions. However, the same techniques for visualizing feature importance and contributions of different features to the classification task can be applied to both.